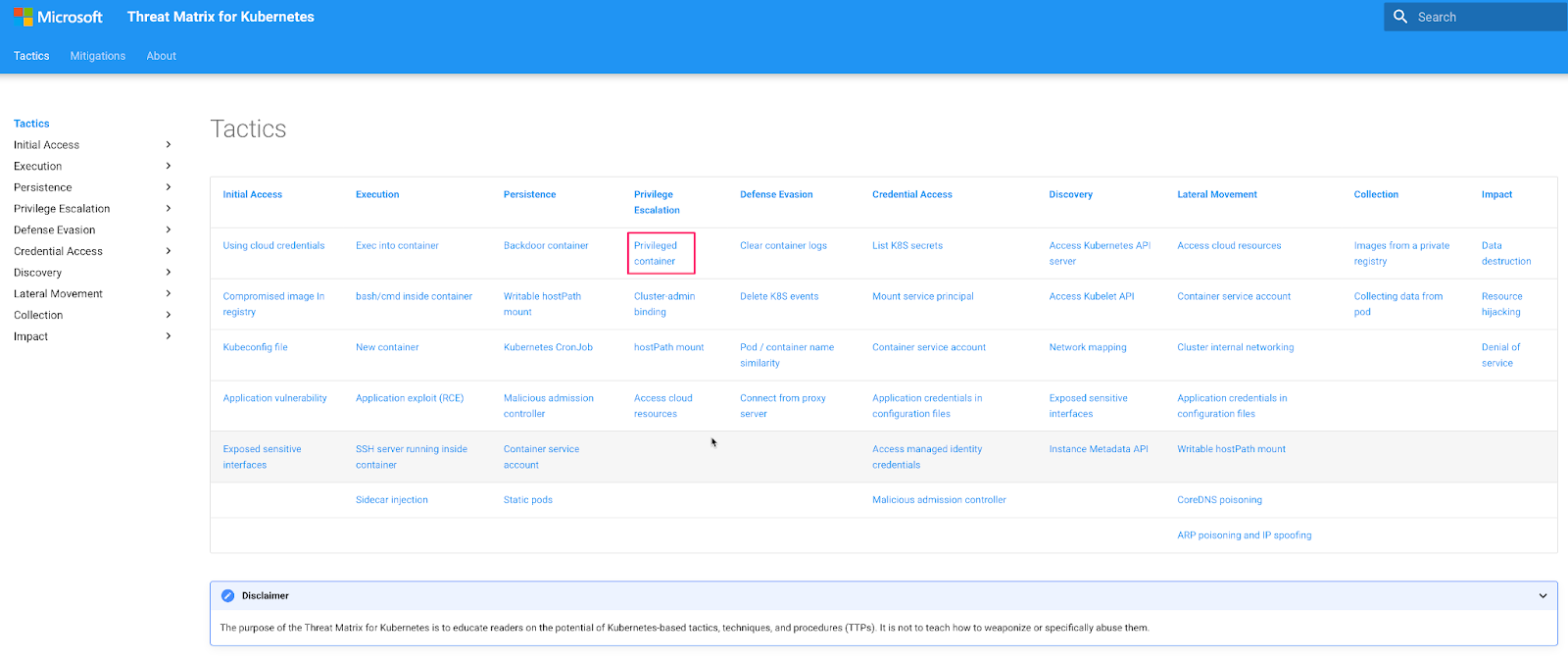

Privileged containers run with elevated privileges and have direct access to the host system, including hardware devices and network interfaces, which leaves them only a small step away from the host itself. That power makes them a frequent target for attackers and a key part of many attacks, as highlighted in the Kubernetes threat matrix maintained by Microsoft. While there are valid use cases for these containers, such as network and storage drivers, security tooling, and observability agents, their use should be carefully restricted to reduce the attack surface.

Microsoft Kubernetes Threat Matrix

What are container escapes?

Container escapes, or the act of gaining access to the underlying host from inside a container, are a constant reminder that containerization alone is not a sufficient security boundary in most cases. Escaping a container enables lateral movement to the host system and bypasses other controls that may be in place. For example, a container escape enables a Kubernetes namespace breakout when pods/containers from different namespaces are scheduled on the same node.

How does eBPF help identify container escapes?

eBPF, which is built into modern Linux kernels, provides real-time data about what’s happening in the container runtime, as well as the node itself. This data can be collected, parsed, and assembled into meaningful groupings of activity to identify container escape behavior, as well as what led up to that behavior, and the consequences of the escape.

In this blog, we’re going to leverage eBPF (via Spyderbat) to see what one of these container escapes actually “looks like,” based on the series of kernel events (system calls) leading up to and following a container escape or breakout.

cgroups release_agent Escape

In the original proof of concept (PoC), Felix Wilhelm showed that by abusing the Linux cgroup v1 “notification on release” feature, a privileged container can write a desired command to the cgroup's release_agent file, which the underlying host then reads and executes as root.

For our initial anatomy of this attack, we will use a variant from Vickie Li here. First, let’s start our privileged pod/container in our AWS EKS cluster. We’ll use a standard Ubuntu base image for this test, with a pod spec that marks the container as privileged.
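The pod spec itself is not reproduced in this text. As a minimal sketch of what a privileged pod along these lines might look like, the shell snippet below writes a hypothetical manifest to a temporary file. The pod name test-pod-3 and the Ubuntu image come from this post; the container name, the sleep duration, and the temp-file handling are assumptions for illustration, not the spec that was actually used.

```shell
#!/bin/sh
# Sketch only: write a hypothetical privileged pod manifest to a temp file.
# The pod name and Ubuntu image come from the post; everything else is assumed.
manifest="$(mktemp)"

cat > "$manifest" <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: test-pod-3
spec:
  containers:
    - name: ubuntu                  # assumed container name
      image: ubuntu
      command: ["sleep", "3600"]    # assumed duration; the post only mentions "sleep"
      securityContext:
        privileged: true            # the setting that makes the container privileged
EOF

echo "Wrote manifest to $manifest"
# To start the pod (needs cluster access; use a disposable test cluster only):
#   kubectl apply -f "$manifest"
```

The only line that matters for the escape is securityContext.privileged: true; an admission policy that rejects that field is the simplest preventative control.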

We kubectl apply this to our K8s cluster to start the pod. Next, we exec into our running pod with kubectl exec -it test-pod-3 /bin/bash. Now we’re ready to run our variant of the exploit, which is the following:

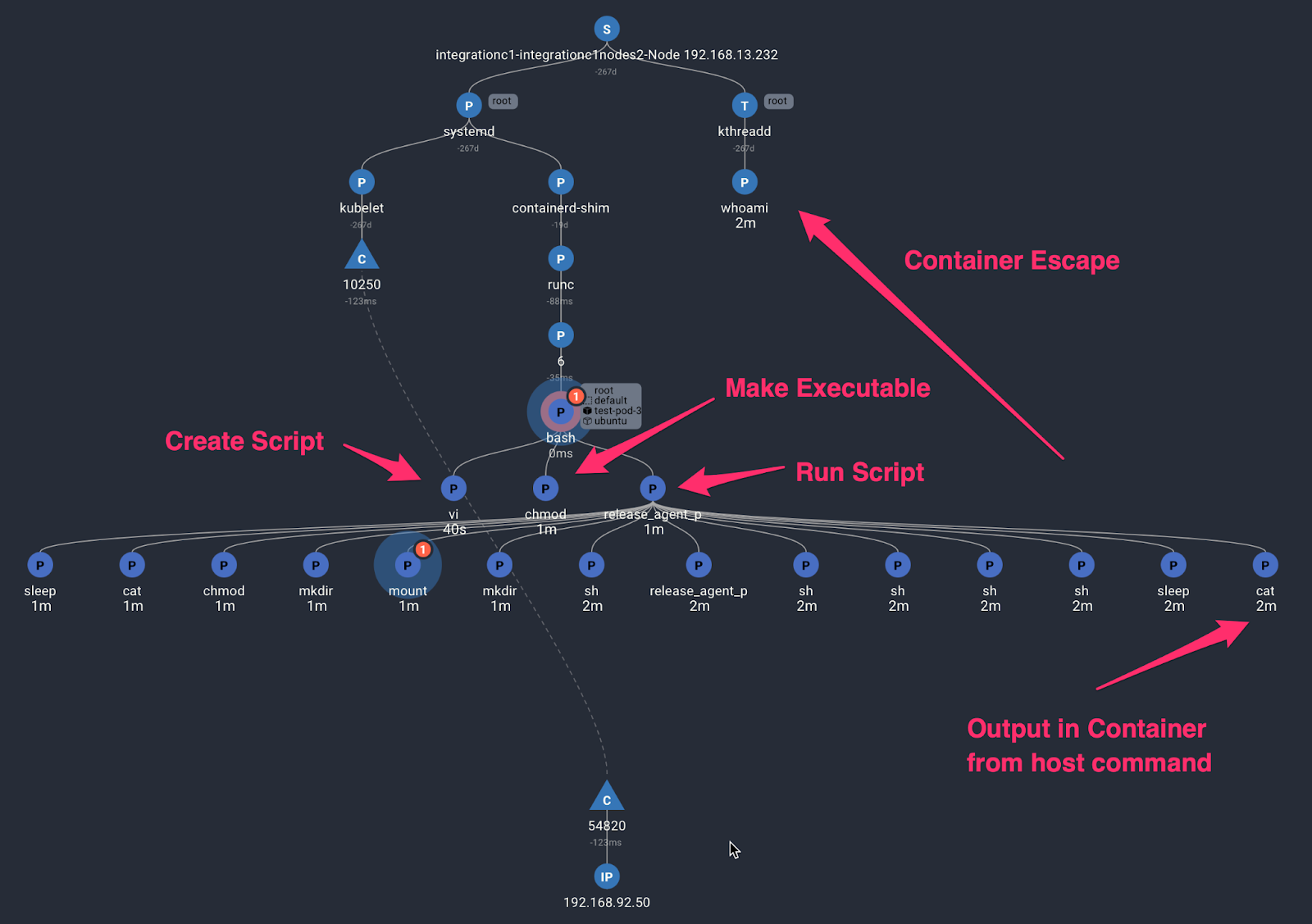

In this case, the command we’re looking to execute on the host is whoami. We can visualize this entire path easily using Spyderbat. You can explore this trace interactively here; no sign-up is required.

The trace can be read from top to bottom, and from left to right in time. Through eBPF and Spyderbat’s context tracing, we see the complete set of activities and how they relate to one another. First up, we see that this (Kubernetes) node was started about 272 days before the bash shell was launched in the container. A key benefit of the eBPF-based approach here is that we can see detailed runtime activity both on the host and inside the container. The blue “P” nodes represent processes executed on the host, whereas the green “P” nodes represent activity inside this specific pod/container.

The sequence of events is:

- The Ubuntu pod gets started and runs the “sleep” command.

- A connection (the blue “C” node) comes into the kubelet a couple of minutes later; this is the result of running our kubectl exec -it test-pod-3 /bin/bash command.

- The kubelet signals the container runtime to start a bash shell in the container.

- We see a series of commands get executed from the above sequence pretty much instantly (showing a simple copy and paste to the terminal in this case), and then a whoami command gets executed on the underlying host/system as a child of the kthreadd process/thread.

If you interact with the trace, you’ll notice that “kthreadd” has a process ID (PID) of 2; you can read more about kthreadd on this Reddit post. Suffice it to say, this is not typical application behavior. It is odd for user commands to be executed as children of PID 2, as is the case with this release_agent container escape.
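As a rough illustration of that signal, the shell sketch below lists processes whose parent is PID 2 but that have a non-empty command line. Kernel threads normally have an empty /proc/<pid>/cmdline, so a user-space command parented by kthreadd stands out. This is not how Spyderbat detects the escape; the function name and the optional proc-root argument are hypothetical, and legitimate kernel-launched helpers (modprobe, for example) may also match, so treat any hit as a lead rather than a verdict.

```shell
#!/bin/sh
# Sketch only: print "PID COMMAND" for user-space processes whose parent is
# kthreadd (PID 2). The function name and PROC_ROOT argument are hypothetical.
list_userspace_children_of_kthreadd() {
    proc_root="${1:-/proc}"
    for status_file in "$proc_root"/[0-9]*/status; do
        [ -r "$status_file" ] || continue
        pid_dir="${status_file%/status}"
        pid="${pid_dir##*/}"

        # The PPid line in /proc/<pid>/status holds the parent PID.
        ppid="$(awk '/^PPid:/ {print $2}' "$status_file" 2>/dev/null)"
        [ "$ppid" = "2" ] || continue

        # cmdline is NUL-separated; kernel threads leave it empty.
        [ -r "$pid_dir/cmdline" ] || continue
        cmdline="$(tr '\0' ' ' < "$pid_dir/cmdline")"
        cmdline="${cmdline% }"
        [ -n "$cmdline" ] || continue

        echo "$pid $cmdline"
    done
}

# Scan the live /proc by default, or a directory passed as the first argument.
list_userspace_children_of_kthreadd "$@"
```

Because this polls /proc, it only catches a process that is alive at the moment of the scan. A short-lived whoami would almost certainly be missed, which is one reason event-based collection such as eBPF is better suited to this kind of detection.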

Kubernetes Pod, exec and escape to host.

A More Generic Variant

As pointed out by Alex Chapman here, the original PoC above relies on the overlayfs container filesystem, which exposes the host filesystem path of container mounts to the container itself. Not all container filesystems exhibit that behavior, so he shows a different approach that leverages the Linux /proc filesystem (via symbolic links) and works if you know the PID of any process running in the container. His updated PoC runs a script that guesses the PID of a running sleep process and then passes a desired command to the underlying host via the release agent. This shell script is below, but we have changed the command executed on the host to whoami, similar to the previous example.

In the figures below, you can see the terminal output that results from running this script, and you can follow along with an interactive trace. Similar to the previous trace, we see:

- A connection into the Kubelet and a bash shell being created from kubectl exec.

- In this case, we see vi being run (where we created the script), chmod to make it executable, and then the script itself being run with ./release_agent_pid_brute.sh. The shell script spawns a large number of child processes (more than 5000 are captured by eBPF and Spyderbat in the full trace), but we have focused on the processes at the start and end of the script execution here. The commands from the script are captured in a complete trace of activity by eBPF and Spyderbat. If you open the interactive trace linked above, you can see that the script took around 42 seconds to run, because it searches through the PID space for the sleep process, as the terminal output from running the script in the container shows below.

Container Escape Using /proc Filesystem

And these are the commands that were executed in the container - we omitted a number of the “Checking pid X” in the output for brevity:

Spyderbat % kubectl exec -it test-pod-3 /bin/bash

kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.

root@test-pod-3:/# vi release_agent_pid_brute.sh

root@test-pod-3:/# chmod a+x release_agent_pid_brute.sh

root@test-pod-3:/# ./release_agent_pid_brute.sh

Checking pid 100

Checking pid 200

Checking pid 300

.

.

Checking pid 5000

Done! Output:

root

Terminal Output from the Script Inside the Container

We see that the output of whoami from the host is rendered in the container's terminal by the final cat command in the script, and is recorded in the trace above.

Escaping non-privileged containers

Finally, in this blog, the author shows that the release agent exploit is not limited to privileged containers. It requires only that 1) we run as root inside the container; 2) the container runs with the SYS_ADMIN capability; 3) the container has no AppArmor profile, or otherwise allows the mount syscall; and 4) the cgroup v1 virtual filesystem is mounted read-write inside the container.
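These conditions can be checked from inside a container with a short script. The shell sketch below is an audit aid, not part of the exploit. The function names and the optional file-path arguments are hypothetical, and it assumes CAP_SYS_ADMIN is bit 21 of the CapEff mask, as defined in the Linux capability headers. It only inspects the AppArmor label and does not test whether the mount syscall is otherwise permitted (for example by seccomp).

```shell
#!/bin/sh
# Sketch only: check the preconditions listed above from inside a container.
# Function names and optional file arguments are hypothetical (they ease testing).

# True if the effective capability mask includes CAP_SYS_ADMIN (bit 21).
has_cap_sys_admin() {
    cap_eff="$(awk '/^CapEff:/ {print $2}' "${1:-/proc/self/status}")"
    [ -n "$cap_eff" ] && [ $(( (0x$cap_eff >> 21) & 1 )) -eq 1 ]
}

# True if any cgroup v1 hierarchy is mounted read-write.
has_rw_cgroup_v1() {
    awk '$3 == "cgroup" && $4 ~ /(^|,)rw(,|$)/ {found = 1} END {exit !found}' \
        "${1:-/proc/mounts}"
}

# True if no AppArmor profile confines this process (label empty or "unconfined").
is_apparmor_unconfined() {
    profile="$(cat "${1:-/proc/self/attr/current}" 2>/dev/null)"
    case "$profile" in
        ""|unconfined*) return 0 ;;
        *) return 1 ;;
    esac
}

# Run a check and print a yes/no line for it.
report() {
    if "$@"; then echo "yes: $*"; else echo "no:  $*"; fi
}

if [ "$(id -u)" -eq 0 ]; then echo "yes: running as root"; else echo "no:  running as root"; fi
report has_cap_sys_admin
report has_rw_cgroup_v1
report is_apparmor_unconfined
```

If all four lines print "yes", the container meets the conditions listed above, even though it was not started as privileged.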

To demonstrate this version of the attack, we start by running the Docker container with the required parameters:

docker run --rm -it --cap-add=SYS_ADMIN --security-opt apparmor=unconfined ubuntu bash

We then run the same commands from the first scenario inside this newly created container:

The captured trace looks almost identical to the first one we explored above, although this time the container has been run with the SYS_ADMIN capability rather than as privileged.

Non-privileged container breakout

Summary

In this post, we explored three different container breakouts based on the cgroups release_agent feature, covering both privileged and non-privileged pods/containers. Using eBPF and Spyderbat, we’ve been able to visualize what these exploits look like at the kernel level by chaining the events together into traces that show the attacks both before and after the breakout. Together, they show that this remains a powerful technique for escalating privileges in container-based environments, and that continuous monitoring, detection, and response mechanisms must be in place to recognize and stop such breakouts, in addition to ongoing preventative measures.
