Cloud Native Runtime Security vs XDR

A number of vendors, both incumbent and new, are coming to market with their own version of an eXtended Detection and Response (XDR) platform. Some are repackaging their endpoint-based solutions into new forms and even claiming to be Cloud Detection and Response (CDR) to keep up with the times. There is even controversy over the definitions: some say that XDR extends from Endpoint Detection and Response (EDR) and therefore requires an endpoint component, while others define XDR as a meta-SIEM that consumes SIEM events, logs, endpoint telemetry, and/or network telemetry for threat detection and response. No matter the definition, XDR platforms seek to improve threat detection accuracy and security operations productivity to combat a skills shortage and an ever-increasing volume and sophistication of attacks.

Spyderbat takes the road less traveled. We are not an XDR. While it may seem odd to make a declarative statement about what Spyderbat isn’t, we feel it often helps in defining what we are. Here are four reasons why Spyderbat is not an XDR.

Reason #1: Focus

XDR platforms provide a broad set of capabilities across threat detection and response orchestration; Spyderbat focuses exclusively on alert triage and investigation.

XDR platforms include a swath of capabilities for mass data collection and data lake management, threat discovery and prioritization, security orchestration and response. XDR platforms require integration with multiple products, often within the vendor’s own portfolio. There is Open XDR, referring to a set of vendors with a vendor-agnostic approach. Regardless, each has the same goal of providing a centralized console to support security analysts across the entire threat detection and response workflow.

In contrast, Spyderbat is hyper-focused on a single, yet extremely profound issue within the workflow - what we call the Detection and Response Chasm. Rather than try to solve all problems across security operations, Spyderbat focuses on one of the most challenging and time-consuming issues. Even organizations using XDR confront this chasm when their security analysts seek to understand each alert, requiring manual investigation. This means Spyderbat interoperates with XDR platforms, providing instantaneous clarity for the security analysts at the point an alert is triggered to answer:

Is this a false positive? And if not, what is the full scope of the threat?

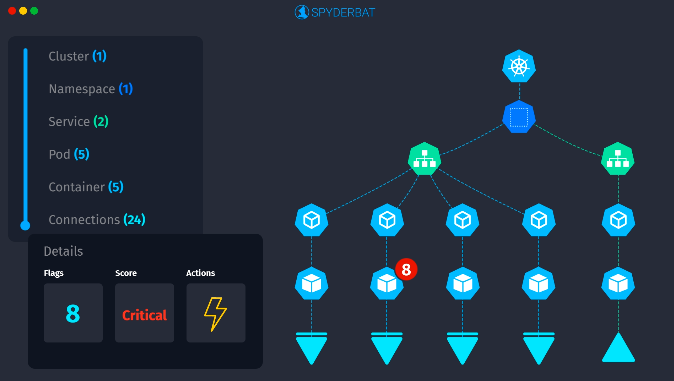

Spyderbat answers this question by generating a system-wide, real-time graph that links every interaction to its causal precedents and outcomes. By seeing the world through causality (what caused what to happen), Spyderbat brings the clarity and completeness missing in XDR’s (and other security platforms’) workflow.

Reason #2: Architecture

XDRs use a data-first model; Spyderbat takes an analytics-first approach.

To perform threat discovery from wherever it may occur, XDR wants any and all data. Log data. Endpoint data. Network data. Just gather it all to ‘see what you get’. XDR then uses a variety of analytical approaches (e.g. rules-based correlation, statistical analysis, machine learning) to make observations from the data. Performing a broad set of analytics across a broad set of data results in a high rate of false positives, creating low-value work for security analysts who must chase down each finding. The attempt to create a set of analytics that works out of the box across all customers, with their great range of data inputs, has never worked. SIEMs tried it for years. Normalizing inputs to fit the analytics’ data structures has never produced reliable results. It is one of the reasons why, even with all the information we have, analysts do not trust alerts.

Spyderbat takes an analytics-first approach. This means starting with the question - what is it we want to discover? Then gather the optimal data for answering that question. This keeps data retrieval to a minimum and provides reliably accurate results, leading us to our next reason.

Reason #3: Source Data

XDR analyzes derived data; Spyderbat uses ‘ground-truth’ data.

Because it collects any and all data, XDR must parse that data into fields consumable by its analytics engines, including assigning each collected record an event category. For example, a standard data feature for machine learning is the count of authentication successes and failures by user, used to detect outliers in authentication success/failure ratios or authentication occurrences outside previously established norms. While self-learning methods have advantages over rule tuning, there are still consequences to this approach:

- Logs change. As applications/systems are upgraded, any alterations to log syntax may prevent successful parsing and event categorization. This causes any dependent analytics to fail.

- Outliers are not inherently security risks. Just because I haven’t used notepad in the last 30 days and none of my peers use notepad does not mean that using notepad today is a security risk. Most ML and statistical analysis approaches lack security context, creating false positives.
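To make the authentication feature above concrete, here is a minimal sketch in Python. It is not any vendor's actual analytics: the `(user, outcome)` event format, the function names `failure_ratios` and `flag_outliers`, the sample users, and the z-score threshold are all assumptions chosen for illustration.

```python
from collections import Counter
from statistics import mean, pstdev

def failure_ratios(events):
    """Return {user: failures / total attempts} from (user, outcome) pairs."""
    totals, failures = Counter(), Counter()
    for user, outcome in events:
        totals[user] += 1
        if outcome == "failure":
            failures[user] += 1
    return {user: failures[user] / totals[user] for user in totals}

def flag_outliers(ratios, z_threshold=1.5):
    """Flag users whose failure ratio sits more than z_threshold
    standard deviations above the group mean (threshold is an assumption)."""
    values = list(ratios.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []  # everyone behaves identically, nothing stands out
    return sorted(u for u, r in ratios.items() if (r - mu) / sigma > z_threshold)

# Hypothetical sample data: four typical users and one with many failures.
events = (
    [("alice", "success")] * 9 + [("alice", "failure")] * 1
    + [("bob", "success")] * 10
    + [("carol", "success")] * 9 + [("carol", "failure")] * 1
    + [("dave", "success")] * 8 + [("dave", "failure")] * 2
    + [("erin", "success")] * 2 + [("erin", "failure")] * 8
)

ratios = failure_ratios(events)
outliers = flag_outliers(ratios)
print(outliers)
```

In this sample only `erin` is flagged. Nothing in the feature itself says whether that is a brute-force attempt or a forgotten password, which is the missing security context the bullet above describes.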

Spyderbat’s main data source is ‘ground truth’: the system calls between the application and the operating system. To access network, processing, or storage resources, every application, even a shell, must go through the operating system. By focusing on this level of interaction, Spyderbat has the luxury of a homogeneous and definitive data source. This brings reliability and accuracy.

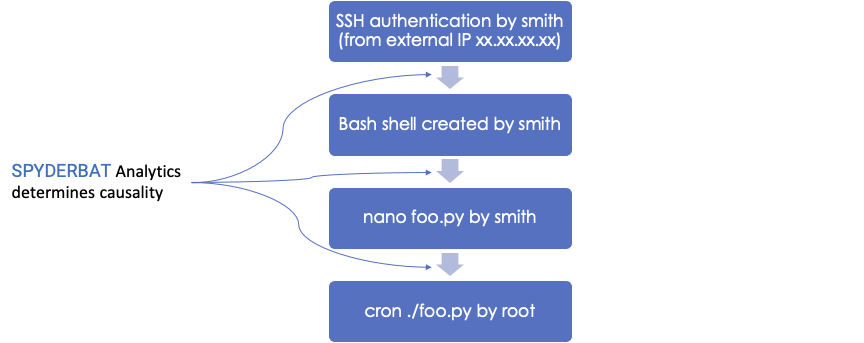

For example, when a file is edited with the command ‘nano foo.py’, Spyderbat unequivocally knows which file was edited, by whom, and when, without parsing authentication logs, system logs, or application logs. Spyderbat’s analytics determine the causal connections between these events. It’s not just that Spyderbat understands the who, what, and when of ‘nano foo.py’; it’s also understood in the context of what happened before (smith@gmail.com authenticated via SSH, creating a bash shell owned by smith) and what happened afterwards (the file foo.py is executed by root in a cronjob).

By focusing on ground-truth and not derived data, Spyderbat captures and represents an accurate and complete causal chain of activities.
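The ‘nano foo.py’ example can be sketched as a small causal graph. This is an illustrative toy, not Spyderbat's data model: the `CausalGraph` class, its method names, and the event labels are hypothetical, and the events simply paraphrase the example above.

```python
from collections import defaultdict

class CausalGraph:
    """Toy directed graph: an edge cause -> effect means 'cause led to effect'."""

    def __init__(self):
        self.effects = defaultdict(set)  # cause -> direct effects
        self.causes = defaultdict(set)   # effect -> direct causes

    def link(self, cause, effect):
        self.effects[cause].add(effect)
        self.causes[effect].add(cause)

    @staticmethod
    def _reach(start, edges):
        """Collect every node reachable from start by following edges."""
        seen, stack = set(), [start]
        while stack:
            for nxt in edges.get(stack.pop(), ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen

    def precedents(self, event):
        """Everything that causally led up to the event."""
        return self._reach(event, self.causes)

    def outcomes(self, event):
        """Everything that causally followed from the event."""
        return self._reach(event, self.effects)

# Hypothetical event labels paraphrasing the example in the text.
g = CausalGraph()
g.link("ssh login: smith@gmail.com", "bash shell owned by smith")
g.link("bash shell owned by smith", "nano foo.py")
g.link("nano foo.py", "foo.py written")
g.link("foo.py written", "cron job runs foo.py as root")

before = g.precedents("nano foo.py")
after = g.outcomes("nano foo.py")
print(sorted(before))
print(sorted(after))
```

Walking the graph backwards from the edit recovers the SSH login and the shell; walking forwards recovers the later execution by root. No log parsing is involved in either direction, only the recorded cause-and-effect links.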

Reason #4: Workflow

XDR uses an alert-based workflow; Spyderbat uses an incident-based approach.

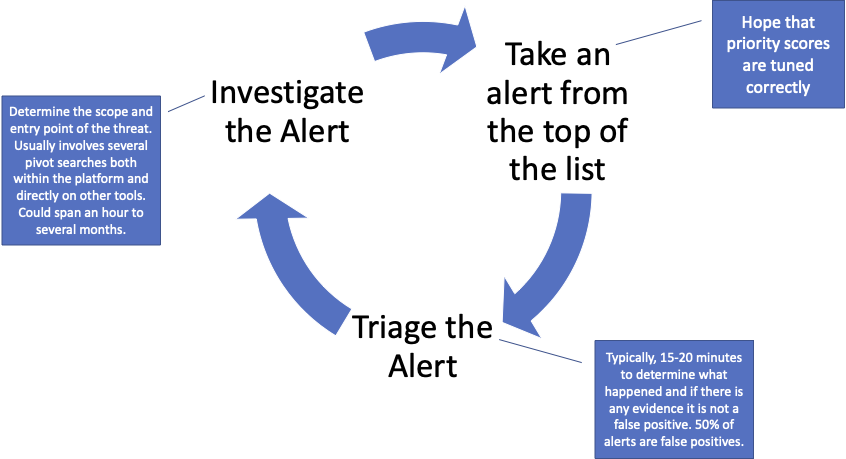

This may be the most fundamental difference between Spyderbat and other security tools. XDR consumes alerts and events from other security tools and other data sources in an attempt to generate higher-fidelity aggregated alerts. This pursuit of the ‘perfect’ alert is faulty. The general approach of collecting from a broad set of data sources and using a broad set of analytics will always generate false positives. It also continues to push an alert-based workflow on the human analyst. Alerts identify symptoms that must be investigated rather than presenting the whole story. The alert workflow is:

There are two major issues with this approach:

- Security analysts spend a large amount of time on low-value work (chasing false positives).

- By investigating one alert at a time, there is a high risk that security analysts see only one aspect of a larger threat. The endpoint got cleaned - but how did the malware get there?

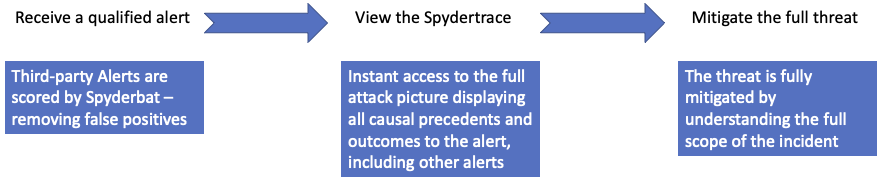

Let’s change the script. Like an XDR, Spyderbat consumes alerts from an aggregation source (e.g. XDR or SIEM) and/or from specific security tools. Unlike an XDR, Spyderbat does not use these alerts to generate new alerts. Instead, Spyderbat fuses third-party alert data to a system-wide, real-time graph that links every interaction to its causal precedents and outcomes. As a result, alerts and security events no longer exist in isolation. They are instantly connected in context and easily viewable by the analyst. In real time, Spyderbat connects previously undetected or uninvestigated attack steps to current alerts, including other alerts.

This changes the game.

Plotting third-party alerts to a living map of activities by their causal connections generates three outcomes:

- Identifying false positives. False positives are accurately identified by recognizing alerts with absolutely no outcomes. It’s not a guess. It's not a confidence score. Literally, nothing happened.

- Recognizing false negatives. What is a false negative? This is an activity that should have been investigated and either wasn't or was dismissed as a false positive. In outcome #1 above, Spyderbat labeled an alert with no causal outcome as a false positive. What if the attacker or malware is just waiting? Malware can have a random wait period to outwit security products. What if, 10-14 days after Spyderbat told you an alert was a false positive, new causal activity occurs? Spyderbat sees it, sees its causal connection to the earlier alert, and re-prioritizes the entire attack trace. The security analyst views the entire attack trace, seeing the prior activity and the causal activity connecting it to its current state.

- Capturing the full set of attack steps of true positives, including other alerts. Everything the attacker did - whether 2 seconds ago or 2 weeks ago - is captured and immediately presented by Spyderbat to the analyst. Instead of investigating a single alert and waiting on pivot search results that are analyzed manually, Spyderbat allows simultaneous investigation of all causally-related alerts for immediate interception. Instead of relying on guesswork or heroic effort analyzing logs, Spyderbat gives confidence that the attack's full scope and entry point are understood for thorough mitigation.

This incident-based approach reduces effort spent on low-value work without risk of 'false negatives' coming back to bite you. It enables you to close multiple alerts simultaneously that have causal connections, even if the connections are spread across multiple benign activities, different systems and users, and long periods of time.
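The three outcomes can be illustrated with a toy model. This sketch is a simplification under stated assumptions, not Spyderbat's implementation: the event names, the helper functions `link`, `has_outcomes`, `trace`, and `related_alerts`, and the rule that "causally connected" means "reachable through any chain of cause/effect links" are all hypothetical.

```python
from collections import defaultdict

effects = defaultdict(set)    # event -> events it directly caused
neighbors = defaultdict(set)  # undirected view, used to group related events

def link(cause, effect):
    """Record that cause led to effect."""
    effects[cause].add(effect)
    neighbors[cause].add(effect)
    neighbors[effect].add(cause)

def has_outcomes(event):
    """True if anything is causally downstream of the event."""
    return bool(effects.get(event))

def trace(event):
    """All events causally connected to event, upstream or downstream."""
    seen, stack = {event}, [event]
    while stack:
        for nxt in neighbors.get(stack.pop(), ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def related_alerts(alert_event, alert_events):
    """Alerts whose events fall in the same causal trace as alert_event."""
    return sorted(set(alert_events) & trace(alert_event))

# Hypothetical alerts raised by third-party tools.
alerts = ["suspicious download", "port scan", "odd login"]

# Day 0: the download alert fires, but nothing follows from it.
link("phishing attachment opened", "suspicious download")
day0 = has_outcomes("suspicious download")   # no outcomes yet

# Day 12: delayed activity appears and links back to the old alert.
link("suspicious download", "payload executed")
link("payload executed", "port scan")
day12 = has_outcomes("suspicious download")  # now it has outcomes

grouped = related_alerts("suspicious download", alerts)
print(day0, day12, grouped)
```

On day 0 the download alert has no outcomes, so it looks like a false positive. Once the delayed activity is linked in, the same alert has outcomes and is grouped with the port scan alert, while the unrelated login alert stays on its own.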

This is how Spyderbat instantly bridges detection to response and why fundamentally we are not an XDR.

For more on how Spyderbat creates a system-wide, real-time graph of causal connections, try Spyderbat with our free Community Edition.